The $1 Trillion Signal That Was Sitting on a Job Board

The signal is at Step 1. The market reacts at Step 5. By then, the capability has been baked in for months.

On April 9th, 2026, software stocks crashed again. Cloudflare plunged 12%. Snowflake dropped 9%. ServiceNow fell 7%. The software sector had now lost over $2 trillion in market value since the correction began, with cybersecurity hit hardest. The catalyst for this latest wave: Anthropic’s Claude Mythos Preview had found thousands of zero-day vulnerabilities across every major operating system and browser, autonomously.

Pundits called it a black swan. Analysts scrambled to update price targets. CISOs fielded panicked board emails.

But this wasn’t a surprise. The signal was visible months earlier. It was sitting on a job board.

The Screenshot

Earlier this year, I took a screenshot of Mercor - an AI hiring platform. If you haven’t heard of them, you should have. Mercor is a $10 billion company whose valuation went from $2B to $10B in eight months. They manage over 30,000 contractors who are collectively paid $1.5 million per day. Their entire business is connecting AI labs with domain experts to generate training data. They are one of the fastest-growing startups in the world, and their customers are a handful of frontier AI labs.

When Mercor’s job board shifts, it’s not a staffing blip. It’s a direct readout of what the labs are building next.

I’d searched one word: “cyber.”

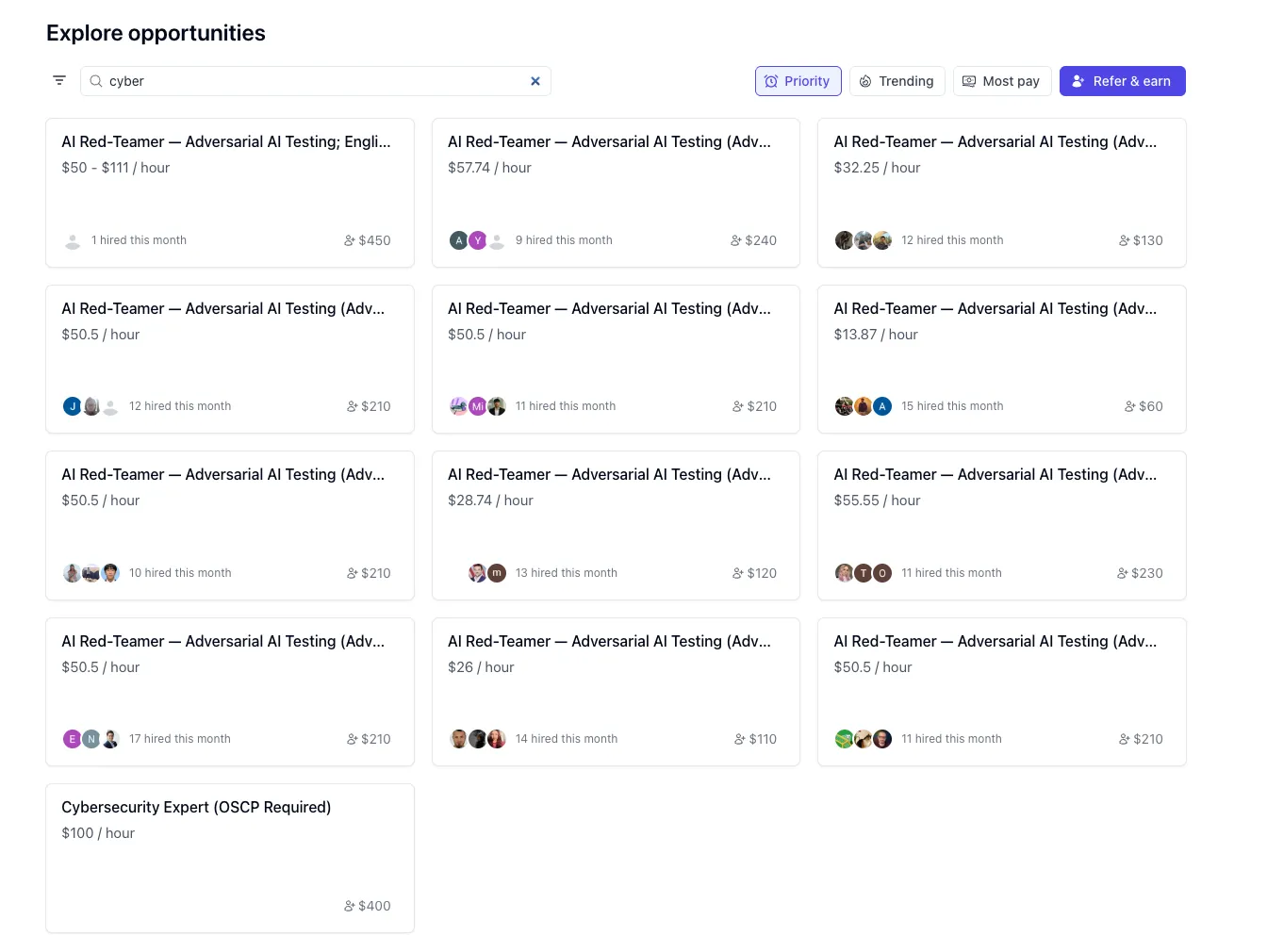

Mercor’s job board. Searching “cyber” returns a wall of adversarial AI testing roles.

The results were a wall of identical listings: “AI Red-Teamer - Adversarial AI Testing.” Thirteen simultaneous postings. Over 100 people hired that month across the roles. Pay ranged from $14 to $111 per hour, with most clustering around $50. At the bottom, a “Cybersecurity Expert (OSCP Required)” role at $100/hour.

This wasn’t a typical staffing pattern. This was industrial-scale procurement of a very specific kind of expertise: people who can think adversarially about software and articulate what they find.

At the time, it was a curiosity. In hindsight, it was a roadmap.

Follow the Training Data

To understand why a job board predicts frontier AI capabilities, you need to understand how these models are actually built.

Frontier models start with pre-training - learning from massive text corpora to build general capabilities. But what turns a generally capable base model into one that’s specifically excellent at, say, cybersecurity? That’s post-training.

The key post-training recipes right now are RLHF (Reinforcement Learning from Human Feedback) and RLVR (Reinforcement Learning with Verifiable Rewards). RLHF works by showing a human expert two outputs from the model and having them rank which one is better; those rankings become the training signal. RLVR works by having experts write problems whose solutions can be checked automatically, then rewarding the model when its answer passes the check.

Both share a critical dependency: they need high-quality data generated by domain experts. The base model gives you general intelligence. The post-training data is what shapes it into a specialist.
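To make the difference between the two signals concrete, here is a minimal sketch. It assumes a Bradley-Terry style pairwise loss for the RLHF side, which is a common formulation but not something any lab mentioned here has confirmed using, and a plain exact-match checker for the RLVR side. The function names `preference_loss` and `verifiable_reward` are made up for illustration.

```python
import math


def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Pairwise loss for an RLHF-style reward model (assumed Bradley-Terry form).

    Computes -log(sigmoid(reward_chosen - reward_rejected)). The loss is small
    when the reward model scores the expert-preferred output higher.
    """
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) is equal to log(1 + exp(-m)).
    return math.log1p(math.exp(-margin))


def verifiable_reward(model_answer: str, reference_answer: str) -> float:
    """RLVR-style reward: 1.0 if the answer passes the check, else 0.0.

    The check here is a hypothetical exact match after trimming whitespace.
    Real checkers might run unit tests or confirm an exploit reproduces.
    """
    return 1.0 if model_answer.strip() == reference_answer.strip() else 0.0


if __name__ == "__main__":
    # Reward model agrees with the human ranking: low loss.
    print(preference_loss(reward_chosen=2.0, reward_rejected=0.0))
    # Reward model disagrees with the human ranking: high loss.
    print(preference_loss(reward_chosen=0.0, reward_rejected=2.0))
    # Expert-written problem with a checkable answer.
    print(verifiable_reward(" 42 ", "42"))
```

In both cases the expensive ingredient is the expert: someone has to decide which output is better, or write a problem whose answer can be checked.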

This means that when an AI lab wants to push a model’s capability in a specific domain, the first thing that happens - months before any announcement - is a hiring surge for the domain experts who can generate that post-training data.

That Mercor job board was the hiring surge for cybersecurity.

And that’s exactly what played out. The job board filled up with adversarial AI testing roles. Weeks later, Anthropic previewed a security scanning tool. Then Mythos leaked. Then the official announcement. Then Project Glasswing. Then the selloff. Each step of the supply chain, playing out in order.

How to Read the Tea Leaves

The pattern here is general, not specific to Mythos. Every frontier capability follows the same supply chain:

1. Hiring surge for domain experts - The labs need training data. They contract domain experts through platforms like Mercor, Scale AI, Surge AI, and others. The volume and specificity of hiring tells you which capability is being built.

2. Training data generation - Those experts spend weeks or months generating adversarial examples, ranking model outputs, and constructing verifiable problems. This is the actual bottleneck in frontier model development - not compute, not architecture.

3. Model training - The lab trains or fine-tunes the model on this data. This takes weeks to months depending on the scale.

4. Internal evaluation and limited preview - The lab tests the model, sometimes leaking capabilities through research previews or safety evaluations. This is where the Mythos leak came from.

5. Public announcement - The keynote, the blog post, the benchmark charts. By this point, the capability has been baked in for months.

If you’re watching step 5 for signal, you’re last. If you’re watching step 1, you’re months ahead.

What the Supply Chain Tells Us Now

The job board is a proxy for the AI roadmap. When you see a platform suddenly hiring hundreds of experts in a specific domain, that domain is about to get a capability leap.

Cybersecurity was the obvious one in early 2026. But the same logic applies everywhere. If you suddenly see a hiring surge for clinical pharmacologists doing “AI evaluation” work, expect a model that’s dramatically better at drug interaction reasoning within 6-12 months. If you see a spike in hiring financial auditors for “adversarial testing,” expect a model that can audit financial statements.
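Watching for that kind of surge can be sketched in code. The example below assumes you already have a list of postings as `(title, posted_on)` pairs from somewhere; how you collect them is out of scope, and no real job-board API is implied. The functions, thresholds, and sample data are all hypothetical, chosen only to show the idea of comparing a week's count against the weeks before it.

```python
from collections import Counter
from datetime import date


def weekly_keyword_counts(postings, keyword):
    """Count postings per ISO (year, week) whose title contains the keyword."""
    counts = Counter()
    for title, posted_on in postings:
        if keyword.lower() in title.lower():
            iso = posted_on.isocalendar()
            counts[(iso[0], iso[1])] += 1
    return counts


def flag_surges(counts, ratio=3.0, min_count=5):
    """Return weeks whose count is at least min_count and at least
    ratio times the mean of all earlier weeks that had any matches.

    Weeks with zero matching postings are absent from counts, so the
    baseline is a rough one. The thresholds are illustrative guesses.
    """
    weeks = sorted(counts)
    flagged = []
    for i, week in enumerate(weeks):
        earlier = [counts[w] for w in weeks[:i]]
        baseline = sum(earlier) / len(earlier) if earlier else 0.0
        if counts[week] >= min_count and counts[week] >= ratio * max(baseline, 1.0):
            flagged.append(week)
    return flagged


# Hypothetical sample data: one matching posting a week, then thirteen at once.
postings = [
    ("AI Red-Teamer - Adversarial AI Testing", date(2026, 1, 5)),
    ("Data Annotator", date(2026, 1, 6)),
    ("AI Red-Teamer - Adversarial AI Testing", date(2026, 1, 12)),
] + [("AI Red-Teamer - Adversarial AI Testing", date(2026, 1, 19))] * 13

counts = weekly_keyword_counts(postings, "red-teamer")
print(flag_surges(counts))  # prints [(2026, 4)]
```

A ratio against a trailing baseline is a crude detector, but it matches the claim being made: what matters is a sudden jump in volume for one narrow kind of role, not the absolute number of listings.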

The frontier isn’t mysterious. It’s just upstream.

The Window Is Compressing

The gap between the job board filling up and the market selloff was months, not years. The next cycle will be shorter. The labs are getting faster at turning training data into capability. The gap between “domain experts are being hired” and “the model can do what those experts do” is compressing.

And the signal is readable. You don’t need insider access or leaked memos. Watch the training data supply chain - the hiring platforms, the contractor networks, the “AI evaluation” job descriptions. When a domain becomes a hiring target, that domain is about to get disrupted.

The trillion-dollar question was never “will AI disrupt your industry?” It was “when?” And the answer was on a job board, months before anyone thought to look.